On Jan. 29, U.S.-based Wiz Research announced it had responsibly disclosed a DeepSeek database that was previously open to the public, exposing chat logs and other sensitive information. DeepSeek locked down the database, but the discovery highlights possible risks with generative AI models, particularly international projects.

DeepSeek shook up the tech industry over the last week as the Chinese company’s AI models rivaled American generative AI leaders. In particular, DeepSeek’s R1 competes with OpenAI o1 on some benchmarks.

How did Wiz Research discover DeepSeek’s public database?

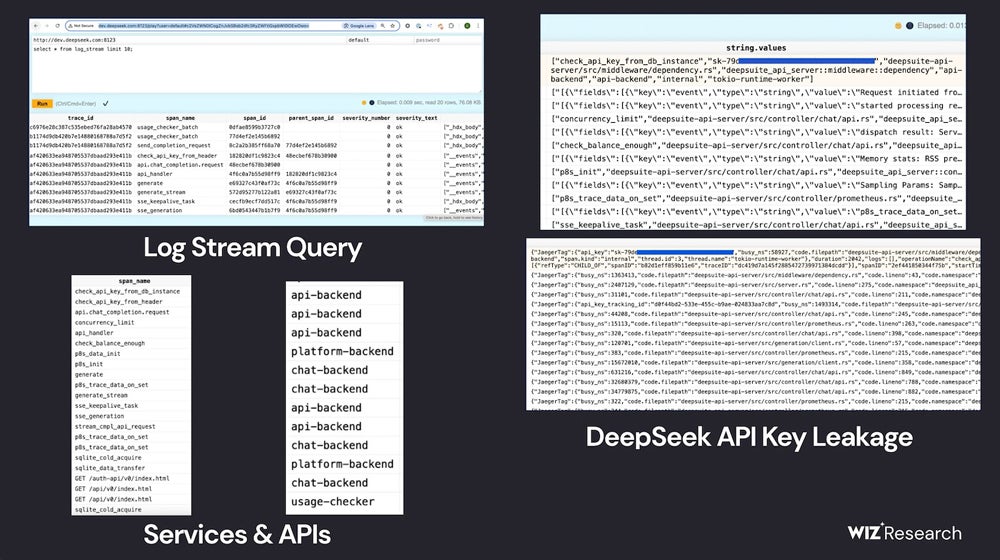

In a blog post disclosing Wiz Research’s work, cloud security researcher Gal Nagli detailed how the team found a publicly accessible ClickHouse database belonging to DeepSeek. The exposure opened up potential paths for control of the database and for privilege escalation attacks. Inside the database, Wiz Research could read chat history, backend data, log streams, API secrets, and operational details.

The team found the ClickHouse database “within minutes” as they assessed DeepSeek’s potential vulnerabilities.

“We were shocked, and also felt a great sense of urgency to act fast, given the magnitude of the discovery,” Nagli said in an email to TechRepublic.

They first assessed DeepSeek’s internet-facing subdomains, and two open ports struck them as unusual; those ports led to DeepSeek’s database hosted on ClickHouse, the open-source database management system. By browsing the tables in ClickHouse, Wiz Research found chat history, API keys, operational metadata, and more.

The Wiz Research team noted they did not “execute intrusive queries” during the exploration process, per ethical research practices.
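For teams that want to check their own deployments for this kind of exposure, a minimal sketch follows. It is not Wiz Research’s method. It assumes ClickHouse’s default HTTP interface port, 8123 (the article does not name the ports that were found open), and the function names are hypothetical. It sends a single harmless `SELECT 1` with no credentials and should only be run against hosts you are authorized to test.

```python
import urllib.error
import urllib.parse
import urllib.request

# Assumption: 8123 is ClickHouse's default HTTP interface port. The article
# does not say which ports Wiz Research found open.
DEFAULT_HTTP_PORT = 8123


def build_probe_url(host: str, port: int = DEFAULT_HTTP_PORT) -> str:
    """Build a URL asking the HTTP interface to run a harmless SELECT 1."""
    query = urllib.parse.quote("SELECT 1")
    return f"http://{host}:{port}/?query={query}"


def classify(status: int, body: str) -> str:
    """Interpret the response to the unauthenticated SELECT 1 probe."""
    if status == 200 and body.strip() == "1":
        return "open"  # the query ran with no credentials supplied
    return "restricted"  # rejected, or not a ClickHouse HTTP endpoint


def probe(host: str, port: int = DEFAULT_HTTP_PORT, timeout: float = 3.0) -> str:
    """Probe a host you are authorized to test.

    Returns "open", "restricted", or "unreachable".
    """
    url = build_probe_url(host, port)
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            body = resp.read().decode("utf-8", "replace")
            return classify(resp.status, body)
    except urllib.error.HTTPError as err:
        # The server answered but refused the request (for example, auth required).
        return classify(err.code, "")
    except OSError:
        # Connection refused, DNS failure, or timeout.
        return "unreachable"
```

A result of `"open"` from `probe("clickhouse.internal.example")` (a placeholder hostname) would mean the database accepts queries from that network position without a password, which is the condition to fix before anything else.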

What does the publicly available database mean for DeepSeek’s AI?

Wiz Research informed DeepSeek of the exposure, and the AI company locked down the database; therefore, DeepSeek AI products should not be affected.

However, the possibility that the database could have remained open to attackers highlights the complexity of securing generative AI products.

“While much of the attention around AI security is focused on futuristic threats, the real dangers often come from basic risks, like accidental external exposure of databases,” Nagli wrote in a blog post.

IT professionals should be aware of the dangers of adopting new and untested products, especially generative AI, too quickly. Give researchers time to find bugs and flaws in the systems. If possible, include cautious timelines in company generative AI use policies.

SEE: Protecting and securing data has become more complicated in the days of generative AI.

“As organizations rush to adopt AI tools and services from a growing number of startups and providers, it’s essential to remember that by doing so, we’re entrusting these companies with sensitive data,” Nagli said.

Depending on your location, IT team members might need to be aware of regulations or security concerns that may apply to generative AI models originating in China.

“For instance, certain facts in China’s history or past are not presented by the models transparently or fully,” noted Unmesh Kulkarni, head of gen AI at data science firm Tredence, in an email to TechRepublic. “The data privacy implications of calling the hosted model are also unclear and most global companies would not be willing to do that. However, one should remember that DeepSeek models are open-source and can be deployed locally within a company’s private cloud or network environment. This would address the data privacy issues or leakage concerns.”

Nagli also recommended self-hosted models when TechRepublic reached him by email.

“Implementing strict access controls, data encryption, and network segmentation can further mitigate risks,” he wrote. “Organizations should ensure they have visibility and governance of the entire AI stack so they can analyze all risks, including usage of malicious models, exposure of training data, sensitive data in training, vulnerabilities in AI SDKs, exposure of AI services, and other toxic risk combinations that may be exploited by attackers.”